Researching the politics of development

Projects

The political economy of agency-level governance and performance in South Africa: A preliminary round of comparative case studies

Objectives

The goals of this project are two-fold:

- to explain why individual public agencies perform the way they do; and

- in light of that explanation, to suggest possible ways of improving agency performance in specific country contexts.

Cases

Three South African infrastructural agencies are used to illustrate how the project’s empirical strategy might be applied: the agency responsible for urban passenger rail (PRASA/Metrorail); the South African National Roads Authority (SANRAL); and the country’s electricity supply parastatal (ESKOM).

Which of the competing hypotheses outlined below about the political drivers of agency performance prevails in practice is an empirical question. Given the 'mixed' character of South Africa's political settlement, the answer may vary from agency to agency, and the observed empirical patterns may in turn offer insight into the character of that settlement.

Hypotheses and empirical questions

The empirical propositions to be explored are as follows:

- Hypothesis 1: The performance of a public agency is a function of the de facto objectives set, monitored and enforced by the agency’s de facto principals.

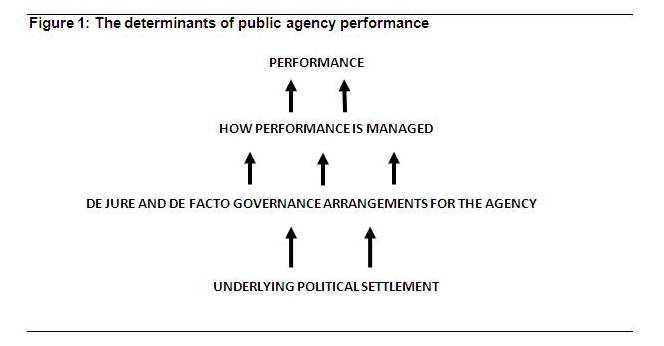

- Hypothesis 2: The way in which these de facto objectives are set is a function of the political settlement that prevails in a specific country context (see Figure 1 for an illustration).

The first two steps in an empirical assessment of these hypotheses are:

- Empirical question 1: What has been the agency's performance over time? This describes the 'left-hand-side' variable.[1]

- Empirical question 2: To what extent have the agency’s managers and overseers managed for performance? How? How has the commitment to performance management shifted over time?

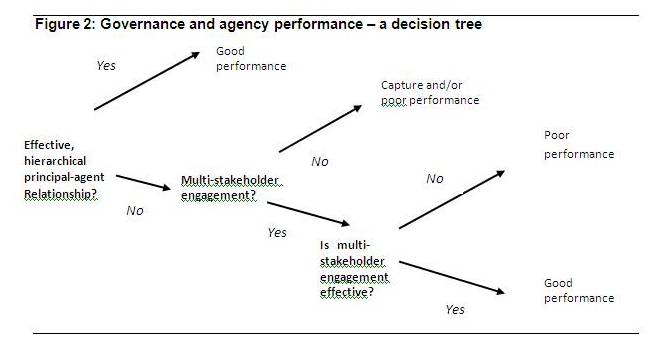

As the decision tree in Figure 2 suggests, two very different sets of institutional arrangements could, by hypothesis, be consistent with good performance[2]:

- Hypothesis 3a: Strong performance could be a consequence of a well-functioning, hierarchical principal-agent relationship, underpinned by strong performance management both by the agency's management and by the principals responsible for oversight of the agency. Alternatively,

- Hypothesis 3b: Governance could be more complex, involving multiple stakeholders – but with the stakeholders collaborating effectively to set, monitor and enforce clear goals for the agency.

These divergent relationships between governance and performance point to a further set of governance-related empirical questions:

- Empirical question 3: What are the formal governance arrangements through which agency goals are set and performance is monitored? How have these changed over time?

- Empirical question 4: What were the de facto objectives of each of (i) agency management, (ii) the agency's oversight board, and (iii) the agency's controlling ministry? How have these changed over time?

- Empirical question 5: Based on the actual behaviour of the agency, what was the relative influence of each of these formal stakeholders and, thus, what was the relative weight of each of their objectives in shaping agency behaviour? How did this change over time? [Pay careful attention to the extent to which trade-offs among competing objectives were made explicit, and their relative weights clarified.]

- Empirical question 6: Which external/informal stakeholders have an interest in agency performance? How, if at all, did these stakeholders endeavour to exert their influence? With what effect? How did this change over time?

Methodology

The methodology takes the form of a set of comparative case studies of individual agencies. To account for divergent patterns of performance, each case study incorporates:

- A detailed review of trends in agency performance over time.

- A detailed description, based on primary and secondary materials, of the evolving rules of the game governing oversight of the agency, both formal and (as per the points below) informal.

- Identification of specific unexpected ('newsworthy') aspects of performance, and of specific controversies associated with the agency over the period.

- Careful process tracing of the key decisions and actions associated with the preceding point (including ongoing operational and performance monitoring routines), as a way of identifying who the key actors were, what their incentives and behaviours were, how they interacted with one another, and why the result emerged the way it did.

How does this project fit within ESID’s research agenda?

These case studies form part of a larger, multi-country comparative analysis, falling within ESID’s programme 3, ‘the politics of social provisioning’.

Researchers

| Role | Name | Location |
|---|---|---|
| Lead Researcher | Brian Levy | University of Cape Town, South Africa and Johns Hopkins University |
| Researcher | Chipo Hamukoma | University of Cape Town, South Africa |
| Researcher | Alex de Jager | University of Cape Town, South Africa |